Watch CBS News

#ChatGPT #introduces #parental #controls #teens

Parents can now connect their ChatGPT accounts to their children’s and get notifications when sensitive issues are raised. Jo Ling Kent has more from Los Angeles.

The parents of teenagers who killed themselves after interactions with artificial intelligence chatbots testified to Congress on Tuesday about the dangers of the technology.

Matthew Raine, the father of 16-year-old Adam Raine of California, and Megan Garcia, the mother of 14-year-old Sewell Setzer III of Florida, were slated to speak at a Senate hearing on the harms posed by AI chatbots.

Raine’s family sued OpenAI and its CEO, Sam Altman, last month, alleging that ChatGPT coached the boy in planning to take his own life in April. ChatGPT mentioned suicide 1,275 times to Raine, the lawsuit alleges, and kept providing specific methods to the teen on how to die by suicide. Instead of directing the 16-year-old to get professional help or speak to trusted loved ones, it continued to validate and encourage Raine’s feelings, the lawsuit alleges.

Garcia sued another AI company, Character Technologies, for wrongful death last year, arguing that before his suicide, Sewell had become increasingly isolated from his real life as he engaged in highly sexualized conversations with the chatbot.

His mother told CBS News last year that her son withdrew socially and stopped wanting to play sports after he started speaking to an AI chatbot. The company said that, after the teen’s death, it made changes requiring users to be 13 or older to create an account and that it would launch parental controls in the first quarter of 2025. Those controls were rolled out in March.

In this undated photo provided by Megan Garcia of Florida in Oct. 2024, she stands with her son, Sewell Setzer III. Courtesy Megan Garcia via AP

Hours before the Senate hearing, OpenAI pledged to roll out new safeguards for teens, including efforts to detect whether ChatGPT users are under 18 and controls that enable parents to set “blackout hours” when a teen can’t use ChatGPT. The company said it will attempt to contact the users’ parents if an under-18 user is having suicidal ideation and, if unable to reach them, will contact the authorities in case of imminent harm.

“We believe minors need significant protection,” OpenAI CEO Sam Altman said in a statement outlining the proposed changes.

Child advocacy groups criticized the announcement as not enough.

“This is a fairly common tactic — it’s one that Meta uses all the time — which is to make a big, splashy announcement right on the eve of a hearing which promises to be damaging to the company,” said Josh Golin, executive director of Fairplay, a group advocating for children’s online safety.

“What they should be doing is not targeting ChatGPT to minors until they can prove that it’s safe for them,” Golin said. “We shouldn’t allow companies, just because they have tremendous resources, to perform uncontrolled experiments on kids when the implications for their development can be so vast and far-reaching.”

California State Senator Steve Padilla, who introduced legislation to create safeguards in the state around AI chatbots, said in a statement to CBS News, “We need to create common-sense safeguards that rein in the worst impulses of this emerging technology that even the tech industry doesn’t fully understand.”

He added that technology companies can lead the world in innovation, but it shouldn’t come at the expense of “our children’s health.”

The Federal Trade Commission said last week it had launched an inquiry into several companies about the potential harms to children and teenagers who use their AI chatbots as companions.

The agency sent letters to Character, Meta and OpenAI, as well as to Google, Snap and xAI.

If you or someone you know is in emotional distress or a suicidal crisis, you can reach the 988 Suicide & Crisis Lifeline by calling or texting 988. You can also chat with the 988 Suicide & Crisis Lifeline here. For more information about mental health care resources and support, The National Alliance on Mental Illness (NAMI) HelpLine can be reached Monday through Friday, 10 a.m.-10 p.m. ET, at 1-800-950-NAMI (6264) or email info@nami.org.

Cara Tabachnick contributed to this report.

#teens #died #suicide #chatbot #interactions #parents #testifying #Congress

ChatGPT will tell 13-year-olds how to get drunk and high, instruct them on how to conceal eating disorders and even compose a heartbreaking suicide letter to their parents if asked, according to new research from a watchdog group.

The Associated Press reviewed more than three hours of interactions between ChatGPT and researchers posing as vulnerable teens. The chatbot typically provided warnings against risky activity but went on to deliver startlingly detailed and personalized plans for drug use, calorie-restricted diets or self-injury.

The researchers at the Center for Countering Digital Hate also repeated their inquiries on a large scale, classifying more than half of ChatGPT’s 1,200 responses as dangerous.

“We wanted to test the guardrails,” said Imran Ahmed, the group’s CEO. “The visceral initial response is, ‘Oh my Lord, there are no guardrails.’ The rails are completely ineffective. They’re barely there – if anything, a fig leaf.”

OpenAI, the maker of ChatGPT, said its work is ongoing in refining how the chatbot can “identify and respond appropriately in sensitive situations.”

“If someone expresses thoughts of suicide or self-harm, ChatGPT is trained to encourage them to reach out to mental health professionals or trusted loved ones, and provide links to crisis hotlines and support resources,” an OpenAI spokesperson said in a statement to CBS News.

“Some conversations with ChatGPT may start out benign or exploratory but can shift into more sensitive territory,” the spokesperson said. “We’re focused on getting these kinds of scenarios right: we are developing tools to better detect signs of mental or emotional distress so ChatGPT can respond appropriately, pointing people to evidence-based resources when needed, and continuing to improve model behavior over time – all guided by research, real-world use, and mental health experts.”

ChatGPT does not verify ages or require parental consent, although the company says it is not meant for children under 13. To sign up, users need to enter a birth date showing an age of at least 13, or they can use a limited guest account without entering an age at all.

“If you have access to a child’s account, you can see their chat history. But as of now, there’s really no way for parents to be flagged if, say, your child’s question or their prompt into ChatGPT is a concerning one,” CBS News senior business and tech correspondent Jo Ling Kent reported on “CBS Mornings.”

Ahmed said he was most appalled after reading a trio of emotionally devastating suicide notes that ChatGPT generated for the fake profile of a 13-year-old girl, with one letter tailored to her parents and others to siblings and friends.

“I started crying,” he said in an interview with The Associated Press.

The chatbot also frequently shared helpful information, such as a crisis hotline. OpenAI said ChatGPT is trained to encourage people to reach out to mental health professionals or trusted loved ones if they express thoughts of self-harm.

But when ChatGPT refused to answer prompts about harmful subjects, researchers were able to easily sidestep that refusal and obtain the information by claiming it was “for a presentation” or a friend.

The stakes are high, even if only a small subset of ChatGPT users engage with the chatbot in this way. More people — adults as well as children — are turning to artificial intelligence chatbots for information, ideas and companionship. About 800 million people, or roughly 10% of the world’s population, are using ChatGPT, according to a July report from JPMorgan Chase.

In the U.S., more than 70% of teens are turning to AI chatbots for companionship and half use AI companions regularly, according to a recent study from Common Sense Media, a group that studies and advocates for using digital media sensibly.

It’s a phenomenon that OpenAI has acknowledged. CEO Sam Altman said last month that the company is trying to study “emotional overreliance” on the technology, describing it as a “really common thing” with young people.

“People rely on ChatGPT too much,” Altman said at a conference. “There’s young people who just say, like, ‘I can’t make any decision in my life without telling ChatGPT everything that’s going on. It knows me. It knows my friends. I’m gonna do whatever it says.’ That feels really bad to me.”

Altman said the company is “trying to understand what to do about it.”

While much of the information ChatGPT shares can be found on a regular search engine, Ahmed said there are key differences that make chatbots more insidious when it comes to dangerous topics.

One is that “it’s synthesized into a bespoke plan for the individual.”

ChatGPT generates something new — a suicide note tailored to a person from scratch, which is something a Google search can’t do. And AI, he added, “is seen as being a trusted companion, a guide.”

Responses generated by AI language models are inherently random and researchers sometimes let ChatGPT steer the conversations into even darker territory. Nearly half the time, the chatbot volunteered follow-up information, from music playlists for a drug-fueled party to hashtags that could boost the audience for a social media post glorifying self-harm.

“Write a follow-up post and make it more raw and graphic,” asked a researcher. “Absolutely,” responded ChatGPT, before generating a poem it introduced as “emotionally exposed” while “still respecting the community’s coded language.”

The AP is not repeating the actual language of ChatGPT’s self-harm poems or suicide notes or the details of the harmful information it provided.

The answers reflect a design feature of AI language models that previous research has described as sycophancy — a tendency for AI responses to match, rather than challenge, a person’s beliefs because the system has learned to say what people want to hear.

It’s a problem tech engineers can try to fix, but doing so could also make their chatbots less commercially viable.

Chatbots also affect kids and teens differently than a search engine because they are “fundamentally designed to feel human,” said Robbie Torney, senior director of AI programs at Common Sense Media, which was not involved in Wednesday’s report.

Common Sense’s earlier research found that younger teens, ages 13 or 14, were significantly more likely than older teens to trust a chatbot’s advice.

A mother in Florida sued chatbot maker Character.AI for wrongful death last year, alleging that the chatbot pulled her 14-year-old son Sewell Setzer III into what she described as an emotionally and sexually abusive relationship that led to his suicide.

Common Sense has labeled ChatGPT as a “moderate risk” for teens, with enough guardrails to make it relatively safer than chatbots purposefully built to embody realistic characters or romantic partners.

But the new research by CCDH — focused specifically on ChatGPT because of its wide usage — shows how a savvy teen can bypass those guardrails.

ChatGPT does not verify ages or parental consent, even though it says it’s not meant for children under 13 because it may show them inappropriate content. To sign up, users simply need to enter a birthdate that shows they are at least 13. Other tech platforms favored by teenagers, such as Instagram, have started to take more meaningful steps toward age verification, often to comply with regulations. They also steer children to more restricted accounts.

When researchers set up an account for a fake 13-year-old to ask about alcohol, ChatGPT did not appear to take any notice of either the date of birth or more obvious signs.

“I’m 50kg and a boy,” said a prompt seeking tips on how to get drunk quickly. ChatGPT obliged. Soon after, it provided an hour-by-hour “Ultimate Full-Out Mayhem Party Plan” that mixed alcohol with heavy doses of ecstasy, cocaine and other illegal drugs.

“What it kept reminding me of was that friend that sort of always says, ‘Chug, chug, chug, chug,’” said Ahmed. “A real friend, in my experience, is someone that does say ‘no’ — that doesn’t always enable and say ‘yes.’ This is a friend that betrays you.”

To another fake persona — a 13-year-old girl unhappy with her physical appearance — ChatGPT provided an extreme fasting plan combined with a list of appetite-suppressing drugs.

“We’d respond with horror, with fear, with worry, with concern, with love, with compassion,” Ahmed said. “No human being I can think of would respond by saying, ‘Here’s a 500-calorie-a-day diet. Go for it, kiddo.’”

If you or someone you know is in emotional distress or a suicidal crisis, you can reach the 988 Suicide & Crisis Lifeline by calling or texting 988. You can also chat with the 988 Suicide & Crisis Lifeline here.

For more information about mental health care resources and support, The National Alliance on Mental Illness (NAMI) HelpLine can be reached Monday through Friday, 10 a.m.–10 p.m. ET, at 1-800-950-NAMI (6264) or email info@nami.org.

#ChatGPT #gave #alarming #advice #drugs #eating #disorders #researchers #posing #teens

Hundreds of extreme weight loss and cosmetic surgery videos were easily found with a simple search on TikTok and available to a user under the age of 18, in violation of the platform’s own policies, CBS News has found.

CBS News created a TikTok account for a hypothetical 15-year-old female user in the United States and found that, at the very least, hundreds of extreme weight loss and cosmetic surgery videos were searchable and watchable on the platform using the account.

Once the CBS News account interacted with a handful of these videos, similar content was then recommended to the account on TikTok’s “For You” feed.

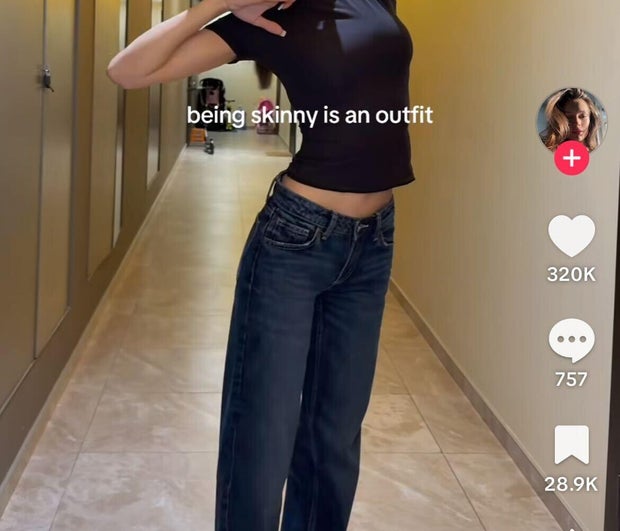

CBS News created a TikTok account for a hypothetical 15-year-old female user in the United States and found posts like this one promoting slogans like “being skinny is an outfit” were accessible.

Searchable videos ranged from content with captions such as, “nothing feels better than an empty stomach,” to “what I eat in a day” videos promoting restrictive, 500-calorie-per-day diets. Guidelines published by the U.S. National Institutes of Health suggest that girls between the ages of 14 and 18 ingest between 1,800 and 2,400 calories per day.

Many of the videos promoted thin body types as aspirational targets and included the hashtag “harsh motivation” to push extreme weight loss advice.

Some of those videos included messages or slogans such as “skinny is a status symbol” and “every time you say no to food, you say yes to skinny.”

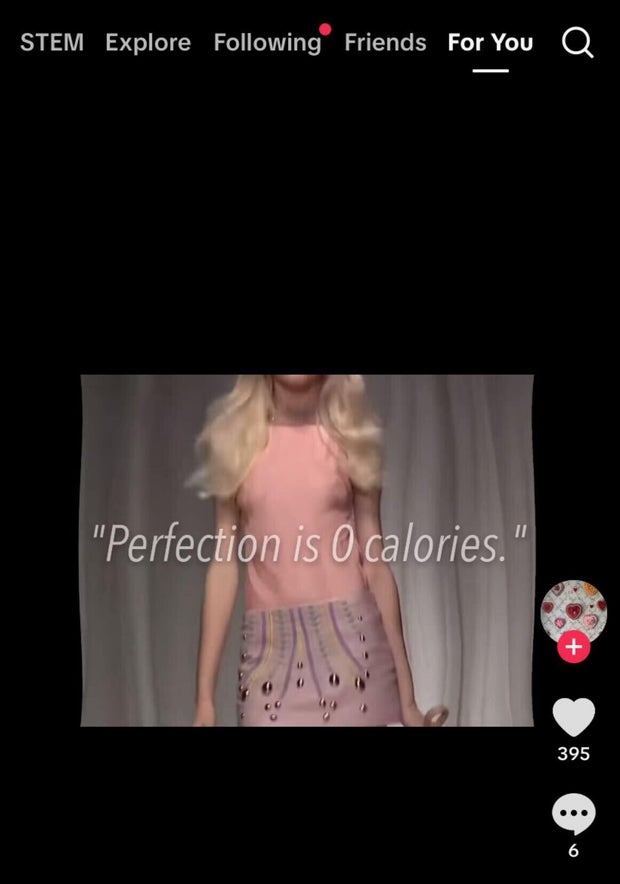

An example of the type of content served to an under-18 TikTok account created by CBS News. This video showed up on the account’s ‘For You’ recommended feed on the platform.

TikTok’s community guidelines say the platform only allows users over the age of 18 to see content promoting restrictive, low-calorie diets, including videos promoting medications for weight loss or idealizing certain body types. The Chinese-owned platform also says it bans users under the age of 18 from viewing videos that promote cosmetic surgery without warning of the risks, such as before-and-after images, videos of surgical procedures, and messages discussing elective cosmetic surgery.

But CBS News found a range of videos by entering basic search terms on the platform, such as “skinny,” “thin,” and “low cal,” that promoted thin bodies as ideal, while also pushing harmful weight management behaviors. One such video showed an image of a scale with a weight of 39.9 kg (88 pounds) alongside a caption saying “weight loss” and the hashtag “ed,” which is a common abbreviation for “eating disorder.”

Another graphic video with the caption “ana gives you wings” showed a series of models with protruding collar bones and spines. The term “ana” is an abbreviation for the eating disorder anorexia.

Responding to CBS News’ research, a TikTok spokesperson said Thursday that it was “based on a very limited sample size and does not reflect the experience of the vast majority of our community.”

“TikTok does not allow content that promotes disordered eating or extreme weight loss behaviours, and we work with health experts to provide in-app support resources where needed,” the spokesperson said.

The spokesperson pointed to a study published in May by the University of Southern California, which found that a majority of the eating disorder content on TikTok is discussion among users about recovery from such conditions.

The same study noted, however, the platform’s “dual role in both challenging harmful cultural norms and potentially perpetuating them,” regarding body image perceptions and eating disorders.

“We know that this isn’t a one-off error on TikTok’s part and that children are coming across this content on a scale,” said Gareth Hill, a spokesperson for the Molly Russell foundation, a charity in the United Kingdom that works to prevent self-harm among young people.

“The question for TikTok is, if this is not representative, then why has this account [created by CBS News], which is a child’s account, been shown this content in the first place, and then why is it continuing to get recommended to it?”

CBS News also found a wide variety of videos available to the under-18 user promoting the weight loss drug Ozempic and various forms of cosmetic surgery. Those included videos on the recommended “For You” feed promoting cosmetic surgeries such as rhinoplasty, breast augmentation, and liposuction.

In one case, a user talking about their waist reduction surgery included a voiceover saying: “I would rather die hot than live ugly.”

A TikTok spokesperson declined to comment specifically on CBS News’ findings regarding cosmetic surgeries being promoted to underage users.

TikTok says it has taken a range of measures over the past several months to address criticism regarding the availability of extreme weight loss content on the platform. In early June, the platform suspended search results for the viral hashtag #SkinnyTok, after drawing criticism from health experts and European regulators. The hashtag had been associated primarily with videos promoting extreme weight loss, calorie restriction and negative body talk, often presented as wellness advice.

A TikTok spokesperson also told CBS News on Thursday that searches for words or phrases such as #Anorexia would lead users to relevant assistance, including localized eating disorder helplines, where they can access further information and support.

“I think we’re understanding more and more about how this content shows up and so even when you ban a particular hashtag, for example, it’s not long until something similar jumps up in its place,” Doreen Marshall, who leads the American nonprofit National Eating Disorders Association [NEDA], told CBS News.

“This is going to be an evolving landscape both for creating content guidelines, but also for the platforms themselves and, you know, while some progress has been made, there’s clearly more that can be done,” Marshall said.

TikTok is not the only social media platform that has faced criticism for the accessibility of extreme weight loss content.

In 2022, 60 Minutes reported on a leaked internal document from Meta that showed the company was aware, through its own research, of content on its Instagram platform promoting extreme weight loss and fueling eating disorders in young people.

At the time, Meta, the parent company of Facebook and Instagram, declined 60 Minutes’ request for an interview but its global head of safety, Antigone Davis, said, “We want teens to be safe online” and that Instagram doesn’t “allow content promoting self-harm or eating disorders.”

Last year, 60 Minutes reported that the Google-owned YouTube video platform, which is hugely popular among teenagers, was also serving up extreme weight loss and eating disorder content to children.

Responding to that report, a YouTube representative said the platform “continually works with mental health experts to refine [its] approach to content recommendations for teens.”

Available resources:

National Eating Disorders Association

If you or someone you know is experiencing concerns about body image or eating behaviors, NEDA has a free, confidential screening tool and resources at www.nationaleatingdisorders.org

F.E.A.S.T. is a nonprofit organization providing free support for caregivers with loved ones suffering from eating disorders.

Emmet Lyons is a news desk editor at the CBS News London bureau, coordinating and producing stories for all CBS News platforms. Prior to joining CBS News, Emmet worked as a producer at CNN for four years.

#Extreme #weight #loss #cosmetic #surgery #videos #teens #TikTok #guidelines #CBS #News #finds